Backstory

A few days ago I had some free time and was browsing .NET open-source libraries on GitHub. I came across a few web scraping libraries and joined a related QQ group, where the veterans were joking: "The better you scrape, the sooner you get locked up." I wanted to build a scraper of my own, but scraping carries real risk and I didn't dare scrape other people's websites casually. So I asked a friend and used his website for practice!

Practice

.NET has quite a few web scraping libraries. For this exercise I chose HtmlAgilityPack.

So what is web scraping?

In short, the basic process of web scraping is: download data (send an HTTP request and get back the response) -> parse the returned text (which could be plain text, JSON, or HTML) -> store the parsed data.
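To make the three stages concrete, here is a minimal sketch, assuming the HtmlAgilityPack NuGet package is installed. The `//h2` selector and the `headings.txt` file name are my own placeholders and say nothing about the real structure of any site.

```csharp
using System;
using System.Collections.Generic;
using System.IO;
using System.Linq;
using System.Net.Http;
using System.Threading.Tasks;
using HtmlAgilityPack;

public static class ScrapePipeline
{
    private static readonly HttpClient Http = new HttpClient();

    // Stage 1: download. Send an HTTP GET request and return the response body as text.
    public static Task<string> DownloadAsync(string url) => Http.GetStringAsync(url);

    // Stage 2: parse. Collect the trimmed text of every <h2> element (placeholder selector).
    public static List<string> ParseHeadings(string html)
    {
        var doc = new HtmlDocument();
        doc.LoadHtml(html);

        // SelectNodes returns null, not an empty collection, when nothing matches.
        var nodes = doc.DocumentNode.SelectNodes("//h2");
        return nodes == null
            ? new List<string>()
            : nodes.Select(n => n.InnerText.Trim()).ToList();
    }

    // Stage 3: store. Write one heading per line to a text file.
    public static void Store(IEnumerable<string> headings, string path) =>
        File.WriteAllLines(path, headings);

    public static async Task Main()
    {
        string html = await DownloadAsync("https://dotnet9.com/");
        List<string> headings = ParseHeadings(html);
        Store(headings, "headings.txt"); // hypothetical output file
        Console.WriteLine($"Stored {headings.Count} headings.");
    }
}
```

Keeping each stage in its own method means the parsing step can be tried against a plain string without touching the network.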

When learning a library, the official documentation is the place to start: https://html-agility-pack.net/

Html Parser

- From File (load an HTML document from a file)

- From String (load an HTML document from a specified string)

- From Web (get an HTML document from an Internet resource)

- From Browser (get an HTML document from a WebBrowser)
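From String and From File are handy for experimenting without hitting a live site. A minimal sketch, assuming the HtmlAgilityPack NuGet package is installed; the sample markup and the `hap-demo.html` file name are made up for illustration.

```csharp
using System;
using System.IO;
using HtmlAgilityPack;

public static class LoadExamples
{
    // From String: parse markup that is already in memory.
    public static HtmlDocument FromString(string html)
    {
        var doc = new HtmlDocument();
        doc.LoadHtml(html);
        return doc;
    }

    // From File: parse markup saved on disk.
    public static HtmlDocument FromFile(string path)
    {
        var doc = new HtmlDocument();
        doc.Load(path);
        return doc;
    }

    public static void Main()
    {
        const string html =
            "<html><head><title>Demo</title></head><body><div id='greeting'>Hello</div></body></html>";

        // Print the page title from the in-memory document.
        Console.WriteLine(FromString(html).DocumentNode.SelectSingleNode("//title").InnerText);

        // Save the same markup to a temporary file (hypothetical name) and load it back.
        string path = Path.Combine(Path.GetTempPath(), "hap-demo.html");
        File.WriteAllText(path, html);
        Console.WriteLine(FromFile(path).DocumentNode.SelectSingleNode("//div[@id='greeting']").InnerText);
    }
}
```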

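I chose From Web to load the HTML document. The code is as follows:

    // Requires: using HtmlAgilityPack;
    var siteUrl = @"https://dotnet9.com/";

    // HtmlWeb downloads the page and parses it into an HtmlDocument.
    HtmlWeb web = new HtmlWeb();
    var htmlDoc = web.Load(siteUrl);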

Now that we have the HTML document, we naturally need to parse its content.

Html Selector

- SelectNodes(String) (selects a list of nodes matching an XPath expression)

- SelectSingleNode(String) (selects the first HtmlNode matching an XPath expression)
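The two selectors behave a little differently when nothing matches: both return null rather than an empty result, so it pays to check before using them. Here is a small sketch against made-up markup (the `starlist` id and `menu` class mirror the selector used later in this post; the rest is an assumption).

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using HtmlAgilityPack;

public static class SelectorDemo
{
    // Hypothetical markup, loosely modeled on a navigation menu.
    public const string SampleHtml = @"
<ul id='starlist'>
  <li class='menu'><a href='/a'>First</a></li>
  <li class='menu'><a href='/b'>Second</a></li>
</ul>";

    // SelectNodes: every match, or an empty list if the XPath matches nothing.
    public static List<string> TextsOf(string html, string xpath)
    {
        var doc = new HtmlDocument();
        doc.LoadHtml(html);
        HtmlNodeCollection nodes = doc.DocumentNode.SelectNodes(xpath);
        if (nodes == null) return new List<string>();
        return nodes.Select(n => n.InnerText.Trim()).ToList();
    }

    // SelectSingleNode: only the first match, or null if there is none.
    public static string FirstTextOf(string html, string xpath)
    {
        var doc = new HtmlDocument();
        doc.LoadHtml(html);
        HtmlNode node = doc.DocumentNode.SelectSingleNode(xpath);
        return node?.InnerText.Trim();
    }

    public static void Main()
    {
        const string menuXPath = "//ul[@id='starlist']//li[@class='menu']";
        Console.WriteLine(string.Join(", ", TextsOf(SampleHtml, menuXPath)));
        Console.WriteLine(FirstTextOf(SampleHtml, menuXPath));
        Console.WriteLine(FirstTextOf(SampleHtml, "//table") ?? "(no match)");
    }
}
```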

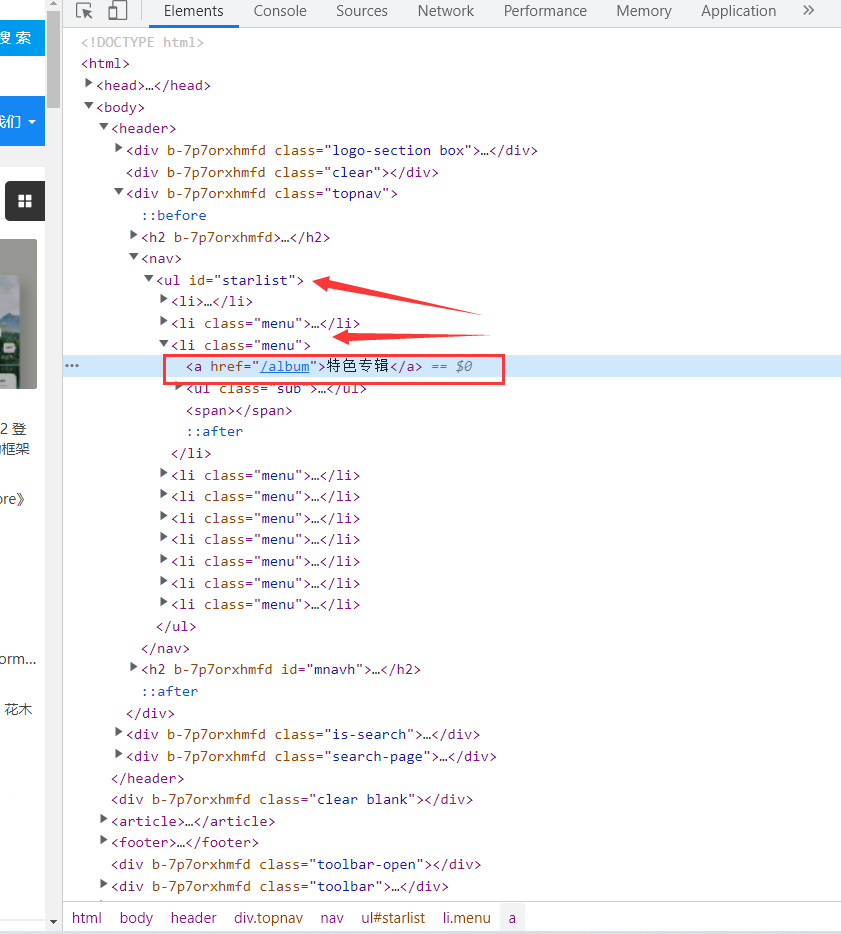

Open the website and find the part we want to scrape. Today we will scrape all the articles under the Featured Albums section of the site.

Open the browser's developer tools. We can see that Featured Albums is an a tag; its parent element is an li, and the parent of that li is a ul. So we can select these nodes with an XPath expression:

    var allNodes = htmlDoc.DocumentNode.SelectNodes("//ul[@id='starlist']//li[@class='menu']");

We can also use an absolute XPath that walks down from the document root to the node:

    var singNodes = htmlDoc.DocumentNode.SelectSingleNode("/html[1]/body[1]/header[1]/div[3]/nav[1]/ul[1]/li[3]//ul[1]");

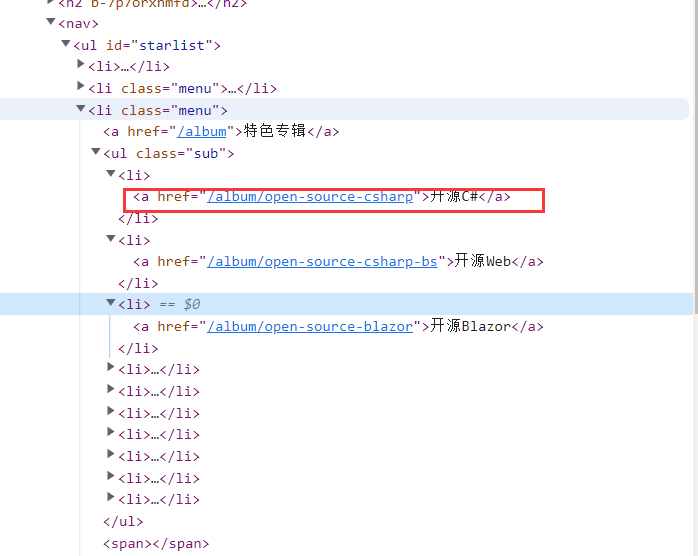

Now let's get the URLs of the submenu entries under Featured Albums. The href attribute of an a tag holds the link's target address, so the first step is to collect every link in this submenu.

    // Requires: using System.Collections.Generic; using System.Linq;
    // Take the <li> children of the submenu's <ul>.
    var menuItems = htmlDoc.DocumentNode
        .SelectSingleNode("/html[1]/body[1]/header[1]/div[3]/nav[1]/ul[1]/li[3]//ul[1]")
        .ChildNodes.Where(o => o.Name == "li");

    List<string> lstUrl = new List<string>();
    foreach (var item in menuItems)
    {
        // The first <a> child of each <li> carries the link.
        var aNode = item.ChildNodes.First(o => o.Name == "a");
        string url = aNode.Attributes["href"].Value;
        lstUrl.Add(url);
    }
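The same links can also be collected with a single XPath query plus `GetAttributeValue`, which returns a default value instead of throwing when an a tag has no href. This is only a sketch against made-up markup; the `starlist` id is borrowed from the earlier selector, and the method name `ExtractLinks` is my own.

```csharp
using System;
using System.Collections.Generic;
using HtmlAgilityPack;

public static class LinkExtractor
{
    // Hypothetical menu markup; the second entry deliberately has no href.
    public const string SampleHtml = @"
<ul id='starlist'>
  <li class='menu'><a href='/one'>One</a></li>
  <li class='menu'><a>No link</a></li>
  <li class='menu'><a href='/two'>Two</a></li>
</ul>";

    // Return the href of every <a> matched by the XPath, skipping missing or empty ones.
    public static List<string> ExtractLinks(string html, string xpath)
    {
        var doc = new HtmlDocument();
        doc.LoadHtml(html);

        var urls = new List<string>();
        var anchors = doc.DocumentNode.SelectNodes(xpath);
        if (anchors == null) return urls; // nothing matched

        foreach (var a in anchors)
        {
            // Falls back to an empty string when the attribute is absent.
            string href = a.GetAttributeValue("href", string.Empty);
            if (!string.IsNullOrWhiteSpace(href)) urls.Add(href);
        }
        return urls;
    }

    public static void Main()
    {
        foreach (var url in ExtractLinks(SampleHtml, "//ul[@id='starlist']/li/a"))
            Console.WriteLine(url);
    }
}
```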

Open any submenu page and you can see each article's title, description, image, and so on, which is exactly the content we want. The analysis is the same as before. The code is as follows:

    // WebData is assumed to be a simple class with string properties Url, Title, Desc, and Img.
    foreach (var item in lstUrl)
    {
        // This assumes the collected hrefs are site-relative, so the site root is prefixed.
        htmlDoc = web.Load("https://dotnet9.com" + item);

        // Take the <li> children of the article list's <ul>.
        var resultNodes = htmlDoc.DocumentNode
            .SelectSingleNode("//div[@class='pics-list-box whitebg']//ul")
            .ChildNodes.Where(o => o.Name == "li");

        foreach (var itemResultNodes in resultNodes)
        {
            WebData webData = new WebData();

            // Each <li> wraps an <a> that contains the title, description, and image.
            var aNodes = itemResultNodes.ChildNodes.First(o => o.Name == "a");
            webData.Url = aNodes.Attributes["href"].Value;
            webData.Title = aNodes.ChildNodes["h2"].InnerText;
            webData.Desc = aNodes.ChildNodes["p"].InnerText;
            webData.Img = aNodes.ChildNodes["i"].ChildNodes["img"].Attributes["src"].Value;

            Console.WriteLine($"Title:{webData.Title}-Description:{webData.Desc}-Img:{webData.Img}-{webData.Url}\r\n");
        }
    }
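Two details are left implicit in the loop: the `WebData` class is never shown, and gluing "https://dotnet9.com" onto each href only works while every href is site-relative. Below is a guess at what `WebData` could look like, together with a small helper (`UrlHelper.ToAbsolute`, a name I made up) that resolves hrefs with `System.Uri`. The example paths are hypothetical.

```csharp
using System;

// Hypothetical shape of the WebData type: one string property
// per field that the scraping loop fills in.
public class WebData
{
    public string Url { get; set; }
    public string Title { get; set; }
    public string Desc { get; set; }
    public string Img { get; set; }
}

public static class UrlHelper
{
    // Resolve an href against the site root. Relative hrefs are combined
    // with the base; an href that is already absolute takes precedence.
    public static string ToAbsolute(string baseUrl, string href)
    {
        return new Uri(new Uri(baseUrl), href).AbsoluteUri;
    }

    public static void Main()
    {
        // Hypothetical paths, used only to show both cases.
        Console.WriteLine(ToAbsolute("https://dotnet9.com", "/album/demo"));
        Console.WriteLine(ToAbsolute("https://dotnet9.com", "https://example.com/post"));
    }
}
```

With a helper like this, the loop could call `web.Load(UrlHelper.ToAbsolute("https://dotnet9.com", item))` instead of concatenating strings.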

Run the code and each article's title, description, image, and URL are printed to the console. Our first web scraper works.

Summary

I wrote my first web scraper on a whim. If anyone has a better solution, you're welcome to share. Shared joy is a double joy. That's all for this post. I hope it helps you.

Finally, a disclaimer: technology itself is neutral, but using it to scrape other people's private or commercial data means disregarding the law. Please stick to your bottom line!